Socratic questioning model.

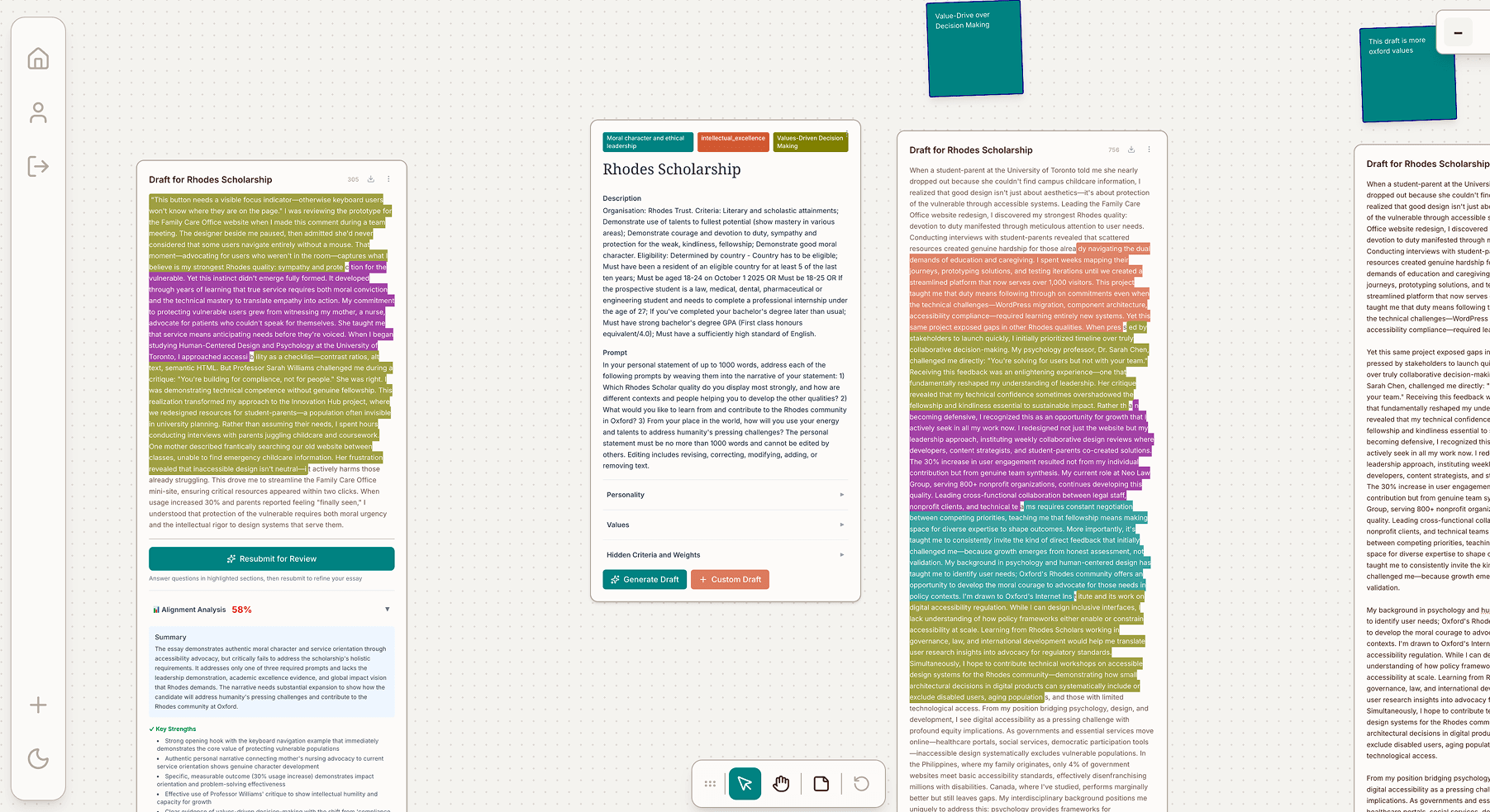

Instead of writing for users, the AI asks questions that guide them to articulate what's already there — making the output authentically theirs.

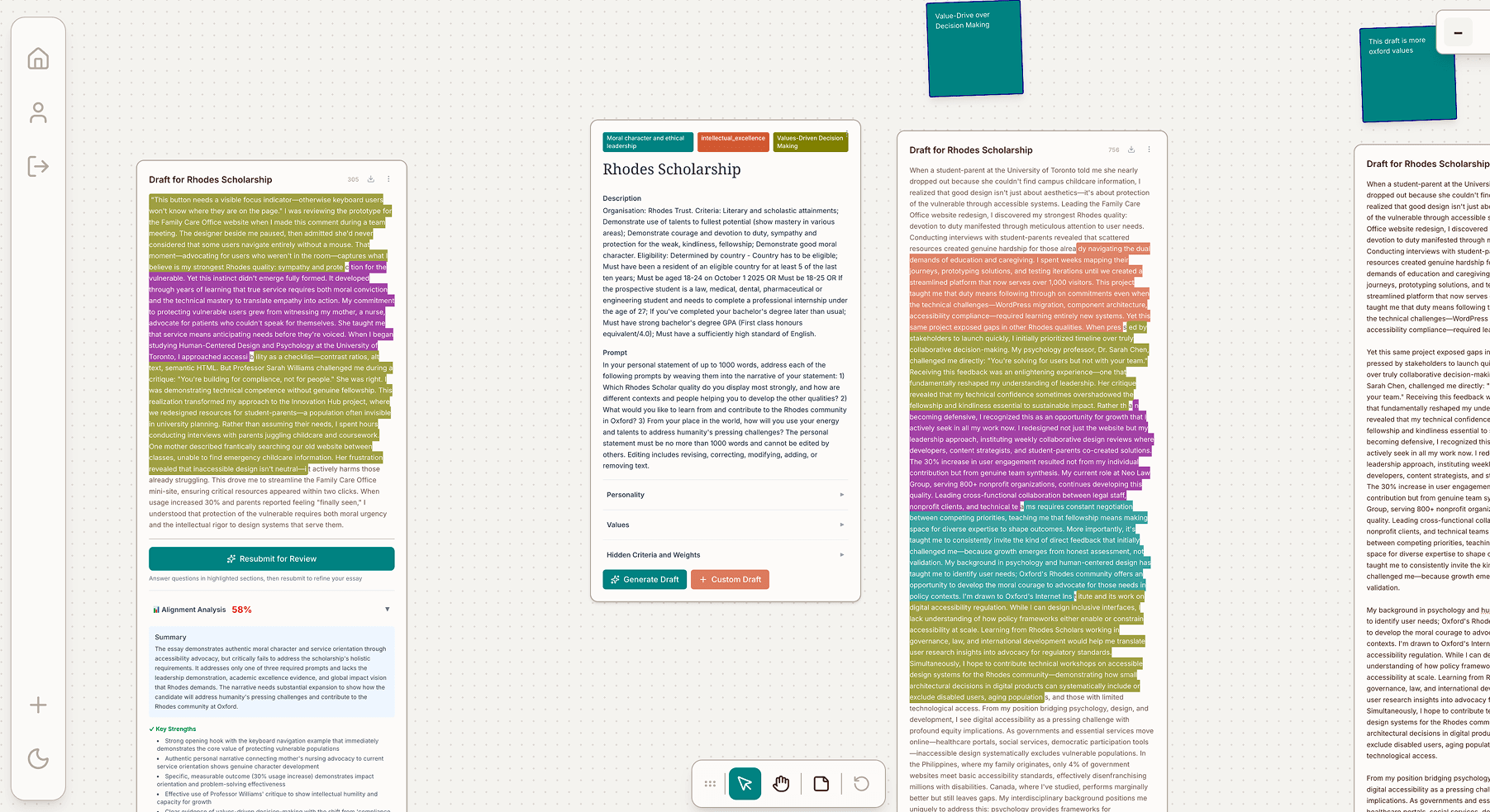

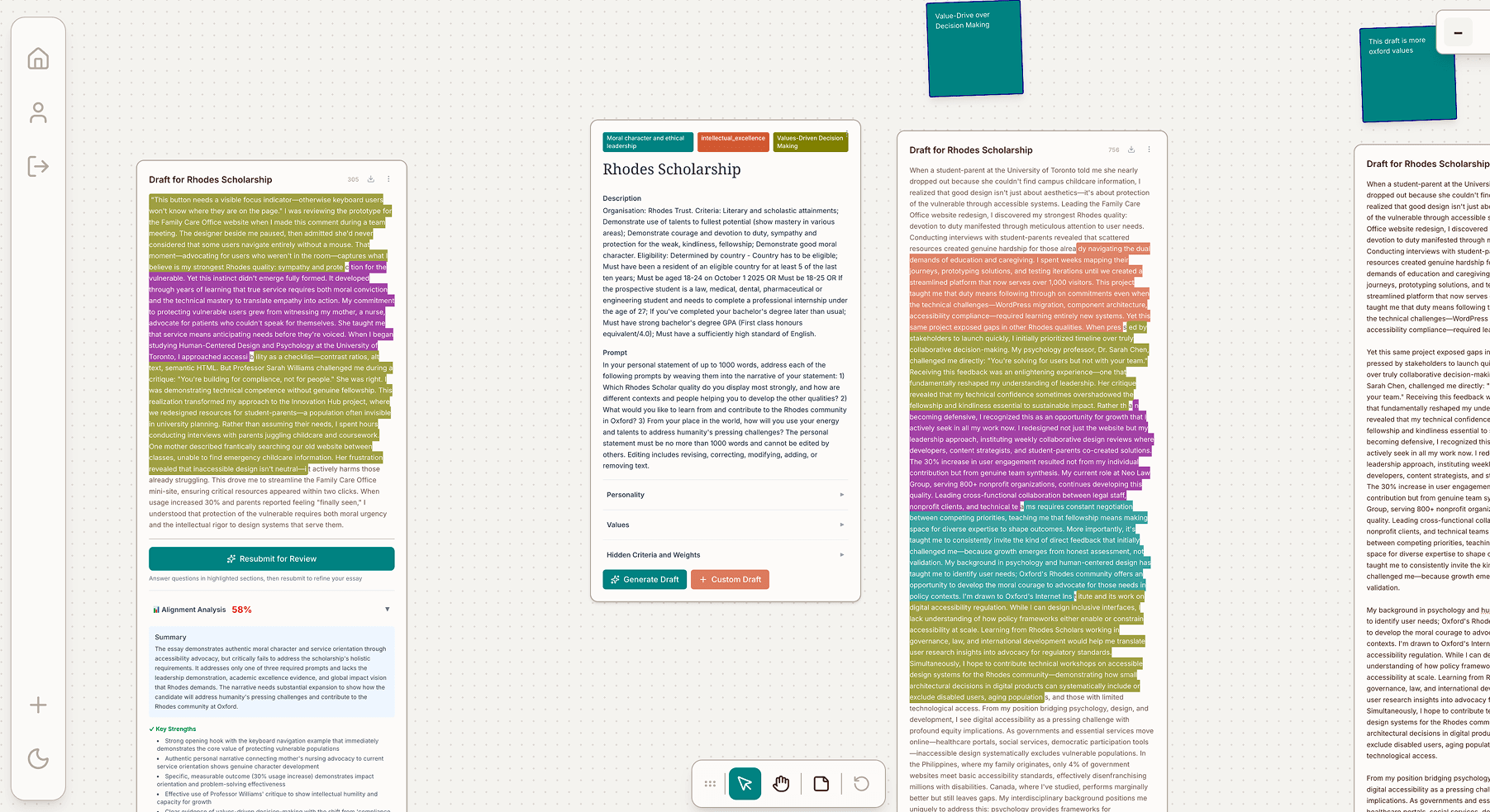

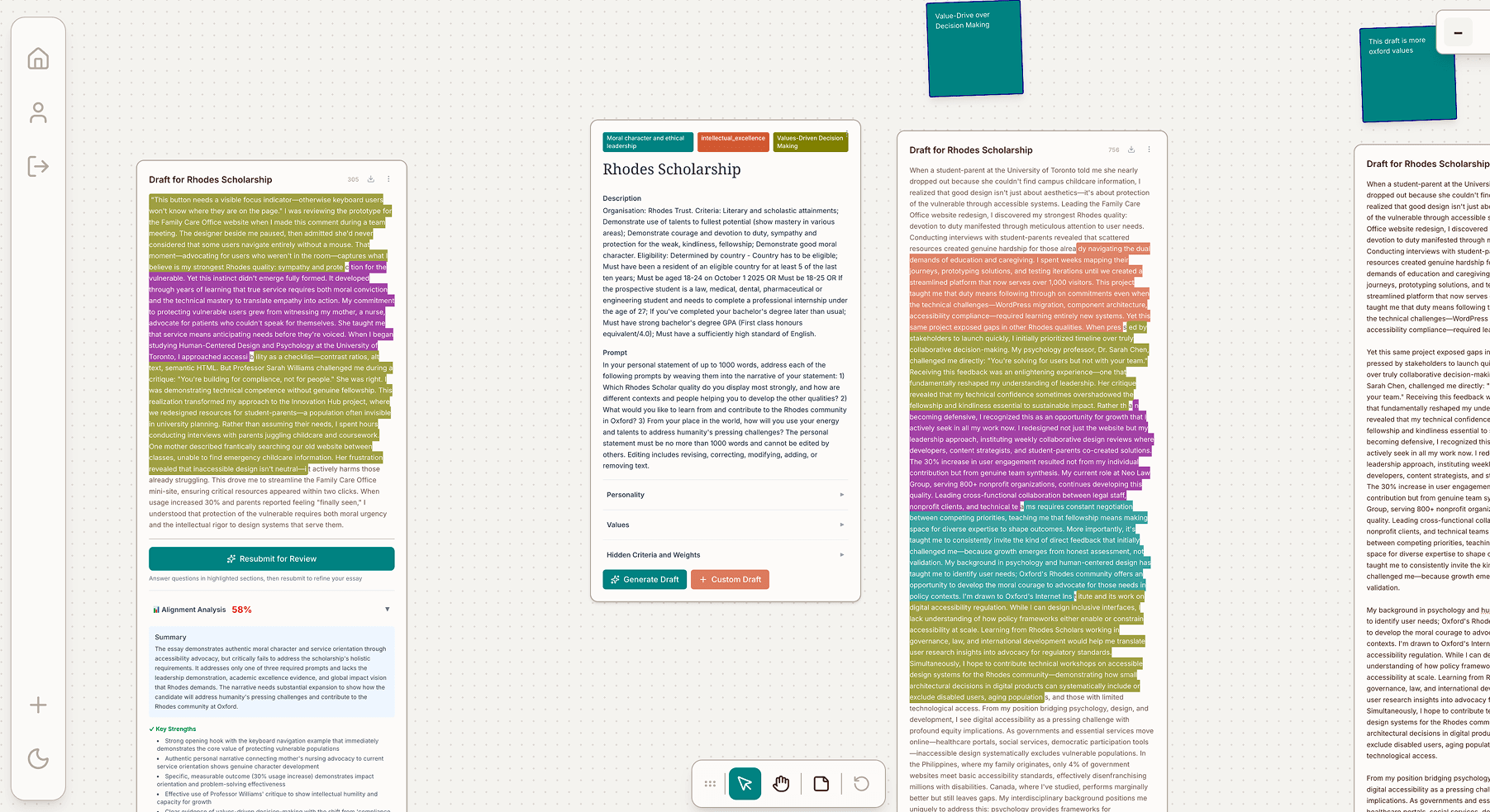

Socratic.ai is a platform that helps students draft scholarship essays by revealing the hidden criteria behind scholarship prompts.

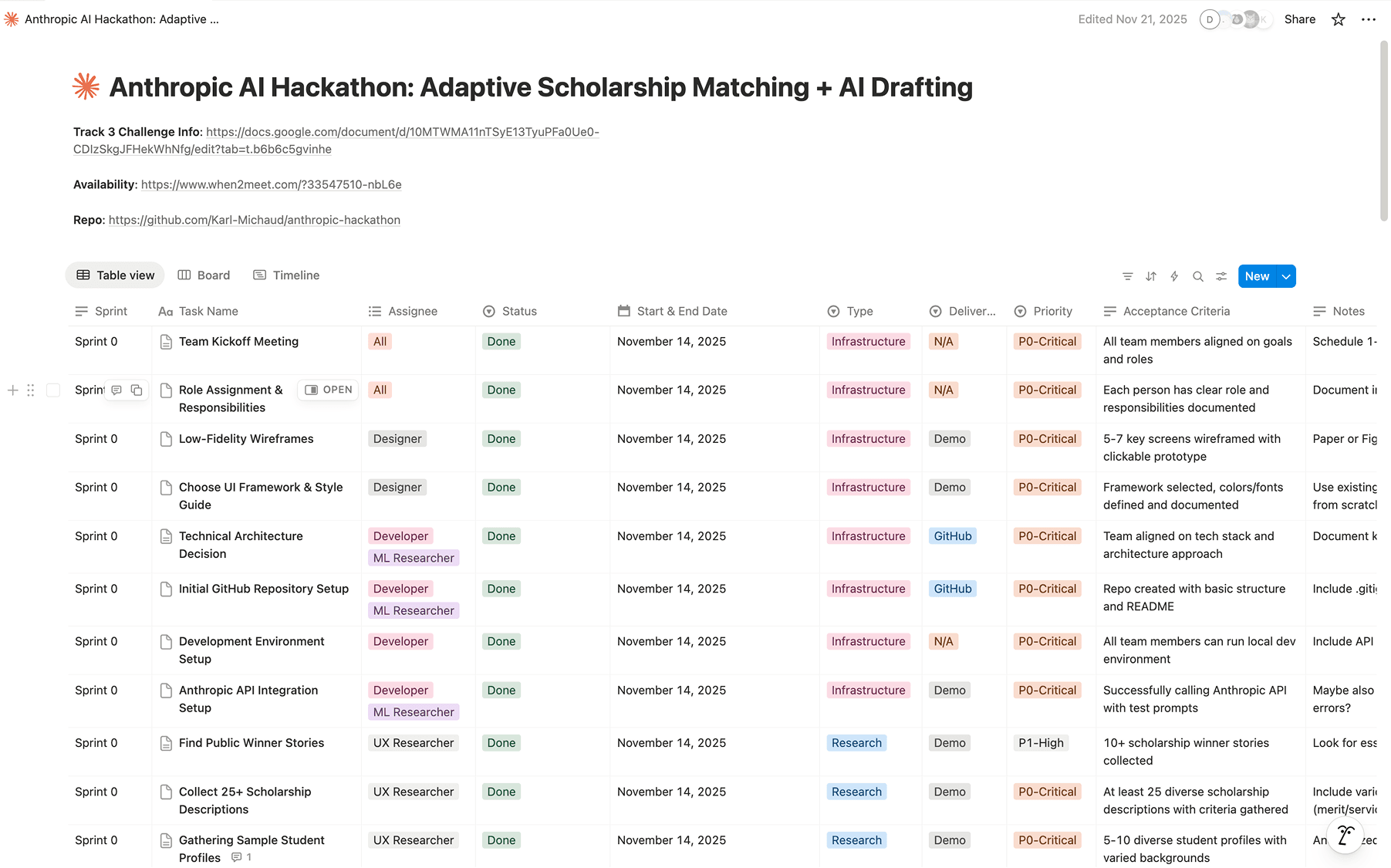

The result is a vector-canvas platform powered by the Claude API. It offers multiple drafts organized visually, insights drawn from scholarship-winning drafts, and AI interaction that feels more like a conversation.

Generic AI writing tools produce generic outputs because they skip the thinking that makes writing personal. For scholarship essays especially, the quality of the thought behind the words determines the outcome — not the polish.

Chat interfaces compound this with a black-box experience. Users can't see the reasoning, can't compare alternatives side by side, and can't organize their thinking spatially the way writing actually works.

How do you make AI collaboration feel transparent and generative, not prescriptive? And how do you ship it in 7 days?


Writing is non-linear. The canvas lets users place, compare, group, and navigate multiple drafts at once, the way thinking actually works.

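We found that the root cause isn't the AI itself. It is a mismatch between the mental model of AI chat, a linear thread of turns, and how writing actually happens in practice.

This mismatch produces three failure modes in chat-based AI writing tools: outputs that skip the writer's own thinking, a single thread that can't hold drafts side by side, and reasoning hidden inside a black box. All three pointed to the same solution: give users more agency over the process.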

The core of Socratic.ai is a Socratic questioning model: instead of writing for users, the AI asks targeted questions that help them articulate what's already there. The output is authentically theirs because the thinking was theirs.

We replaced the chat thread with a vector canvas that lets users place, compare, group, and navigate their ideas the way writing actually works — non-linearly, spatially, iteratively.
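To make "place, compare, group, and navigate" concrete, here is a minimal sketch of how a canvas like this might model drafts. It assumes each draft is a card with x/y coordinates and an optional group; `DraftCard`, `Canvas`, and its methods are hypothetical names for illustration, not Socratic.ai's actual implementation.

```typescript
// Hypothetical data model: each draft is a card placed at (x, y) on the canvas.
interface DraftCard {
  id: string;
  text: string;
  x: number;
  y: number;
  groupId?: string; // optional cluster the user has dragged this card into
}

// Hypothetical canvas store illustrating place / group / compare / navigate.
class Canvas {
  private cards = new Map<string, DraftCard>();

  // Place a new draft, or move an existing one, to a canvas position.
  place(card: DraftCard): void {
    this.cards.set(card.id, { ...card });
  }

  // Put several drafts into the same group so they can be compared together.
  group(groupId: string, ids: string[]): void {
    for (const id of ids) {
      const card = this.cards.get(id);
      if (card) card.groupId = groupId;
    }
  }

  // Return a group's drafts ordered left to right, for side-by-side comparison.
  compare(groupId: string): DraftCard[] {
    return Array.from(this.cards.values())
      .filter((c) => c.groupId === groupId)
      .sort((a, b) => a.x - b.x);
  }

  // Navigate: find the draft closest to a point, e.g. where the user clicked.
  nearest(x: number, y: number): DraftCard | undefined {
    let best: DraftCard | undefined;
    let bestDist = Infinity;
    for (const c of this.cards.values()) {
      const d = Math.hypot(c.x - x, c.y - y);
      if (d < bestDist) {
        bestDist = d;
        best = c;
      }
    }
    return best;
  }
}
```

Because position is part of the data rather than a side effect of message order, two competing openings can sit next to each other and be compared directly. A linear chat thread can't express that.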

Scholarship prompts are designed to be open-ended, but winning essays share hidden criteria that committees never write down. Socratic.ai surfaces those criteria during the questioning process, so users write toward them without being told what to say.

Socratic.ai makes AI collaboration transparent and generative — not prescriptive. The Socratic questioning model surfaces authentic voice. The canvas gives writers spatial control over their thinking. The reasoning panel makes AI logic visible and evaluable.